The AI Tipping Point: Why 2026 Is the Year Software Stopped Waiting for Instructions

TL;DR

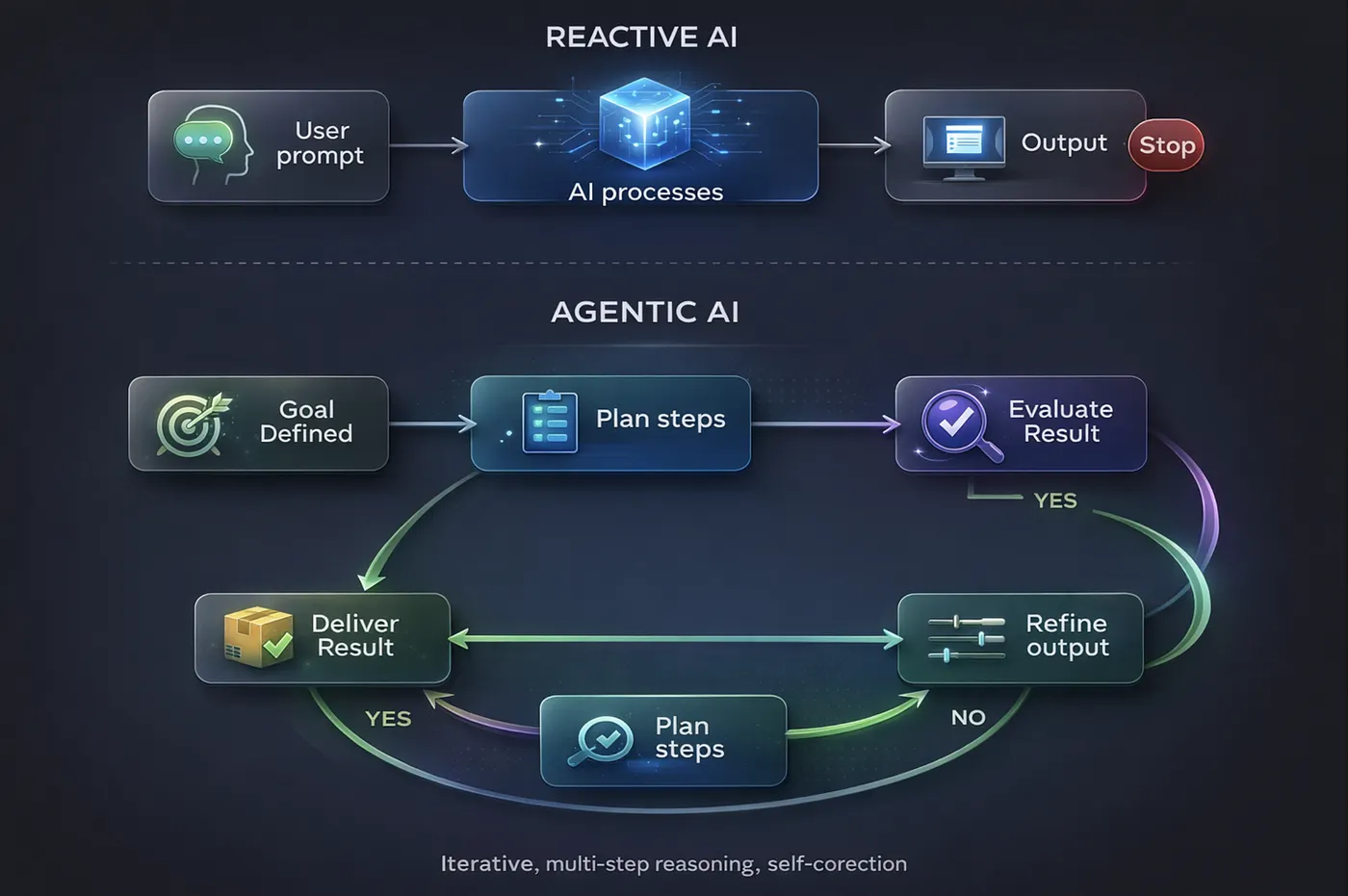

- AI in 2026 has shifted from reactive (prompt → response) to agentic: systems now chain decisions, carry context forward, and act on goals without step-by-step instructions.

- This changes everything downstream: infrastructure costs, workforce dynamics, security models, and how companies choose a software development partner.

- The physical cost of AI is becoming a societal issue: energy, cooling, and data center demands are scaling faster than most organizations anticipated.

- For software teams, whether in-house or working with a dedicated development team, the question is no longer if AI will reshape your workflow, but how fast you adapt.

The Moment Software Changed Its Nature

For decades, software behaved in a predictable way. It waited.

No matter how complex the system (an operating system, a web application, or a machine learning model), it remained fundamentally passive. It required input, followed instructions, and produced output. Even the most advanced automation systems were, at their core, chains of predefined logic.

Artificial intelligence initially followed the same pattern. It felt revolutionary because of what it could produce, but structurally, it was still reactive. You asked a question, it generated an answer. You gave it a task, it executed within boundaries you defined.

That mental model is now collapsing.

In 2026, something deeper is happening: not just an improvement in capability, but a shift in behavior. AI systems are no longer confined to responding. They are beginning to operate in loops of reasoning and action, where each output becomes the input for the next decision. As a custom software development company working with AI daily, we’ve watched this transition move from research papers to production systems in under a year.

Software is no longer waiting. It is moving. And once it starts interpreting goals instead of executing commands, the entire relationship between humans and technology changes.

From Responses to Decisions: Understanding the Shift

Earlier AI systems were bounded by a single interaction. A prompt was given, a response was generated, and the system stopped. Any further action required another prompt. The intelligence existed, but it was locked inside isolated exchanges.

What is emerging now is something closer to continuity.

Instead of answering a question once, AI systems are being designed to carry context forward, evaluate their own outputs, and determine what needs to happen next. This introduces a chain of reasoning that extends well beyond a single step.

Consider a practical example. Ask a system to “prepare a market analysis.” Previously, the system would generate a static document based on whatever information it had access to. Today, a more advanced system interprets that request as a multi-stage objective. It identifies what data is needed, retrieves it from multiple sources, compares trends, generates an initial report, evaluates its completeness, refines weak sections, and delivers the result.

At no point does the user explicitly define each step. The system fills in the gaps.

This is not just automation; it is decision layering, where each action is shaped by the outcome of the previous one. The system is not simply executing. It is navigating. And navigation requires something fundamentally different from execution: judgment.
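To make the loop concrete, here is a minimal Python sketch of the pattern described above. It is an illustration under stated assumptions, not a real agent framework: `Context`, `plan_next_step`, `run_step`, the source names, and the quality scores are all hypothetical stand-ins for what would be model calls and tool invocations in a production system.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Context:
    """State carried forward between steps (hypothetical structure)."""
    goal: str
    data: list = field(default_factory=list)
    draft: str = ""
    quality: int = 0  # completeness score out of 100, made up for the sketch
    history: list = field(default_factory=list)


def plan_next_step(ctx: Context) -> Optional[str]:
    """Choose the next action from the current state, not from user input."""
    if not ctx.data:
        return "retrieve"
    if not ctx.draft:
        return "draft"
    if ctx.quality < 80:
        return "refine"
    return None  # the goal is considered met


def run_step(step: str, ctx: Context) -> None:
    """Stand-in for a model or tool call; it only updates the shared state."""
    if step == "retrieve":
        ctx.data = ["source_a", "source_b"]  # placeholder sources
    elif step == "draft":
        ctx.draft = f"Report on {ctx.goal} using {len(ctx.data)} sources"
        ctx.quality = 50
    elif step == "refine":
        ctx.draft += " (refined)"
        ctx.quality += 20
    ctx.history.append(step)


def run_agent(goal: str, max_steps: int = 10) -> Context:
    ctx = Context(goal=goal)
    for _ in range(max_steps):  # hard cap so the loop cannot run forever
        step = plan_next_step(ctx)
        if step is None:
            break
        run_step(step, ctx)  # the outcome shapes the next decision
    return ctx


result = run_agent("market analysis")
print(result.history)
```

The point of the sketch is the shape: the caller states a goal once, and every later step is chosen from the state left behind by the previous one. The `max_steps` cap is a deliberate choice, since a loop that decides for itself when to stop needs a hard limit.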

For anyone building software today, whether you’re a SaaS development company or managing a cross-platform app development project in Flutter, this shift in AI capability directly impacts how you architect, plan, and deliver.

When Software Starts to Coordinate Itself

Once systems gain the ability to operate across multiple steps, a new challenge emerges: specialization.

A single system trying to handle everything, from data retrieval to analysis to communication, quickly becomes inefficient. This has led to architectures where multiple AI systems, each focused on a specific function, work together.

What makes this interesting is not just the division of labor, but how coordination happens.

In traditional systems, coordination is explicitly programmed. Developers define how components interact, what data flows between them, and how errors are handled. In newer AI-driven systems, coordination is increasingly dynamic. One system produces an output that another system interprets not through rigid schemas but through contextual understanding.

The interaction becomes less about structured data exchange and more about semantic alignment: systems understanding each other’s intent rather than just their format. This creates a network of interactions that resembles collaboration more than execution.

But this introduces fragility. When coordination is no longer strictly defined, failures become harder to trace. If one part of the system behaves unexpectedly, the effects cascade in ways that are difficult to predict. Debugging shifts from analyzing code paths to interpreting behavior patterns.
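One common way to keep such a system debuggable is to record every handoff between components, so a surprising result can be traced back to the message that caused it. The Python sketch below shows the idea under simplifying assumptions: the three "agents" are plain functions standing in for model-backed components, and `Handoff` and `run_pipeline` are hypothetical names, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class Handoff:
    """One recorded message passed from one component to the next."""
    sender: str
    receiver: str
    message: str


# Hypothetical specialists; real ones would interpret free-form text
# with a model instead of formatting a string.
def retriever(message: str) -> str:
    return f"raw figures for {message}"


def analyst(message: str) -> str:
    return f"summary of {message}"


def writer(message: str) -> str:
    return f"report based on {message}"


def run_pipeline(task: str, agents: list) -> tuple:
    """Pass the task through each agent in order, logging every handoff."""
    trace = []
    message, sender = task, "user"
    for name, agent in agents:
        trace.append(Handoff(sender, name, message))
        message = agent(message)
        sender = name
    return message, trace


output, trace = run_pipeline(
    "Q3 sales",
    [("retriever", retriever), ("analyst", analyst), ("writer", writer)],
)
for handoff in trace:
    print(f"{handoff.sender} -> {handoff.receiver}: {handoff.message}")
```

With a trace like this, the question "why did the writer produce that?" becomes "what did the analyst hand it?", which is closer to reading a conversation than stepping through a code path.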

As systems become more intelligent, they also become less transparent. This is something we think about constantly as a team building AI-powered solutions: every layer of intelligence we add is a layer of predictability we trade away.

The Hidden Layer: Why AI Is Now a Physical Problem

It is easy to think of AI as something abstract: algorithms, models, code.

But beneath that abstraction lies a very real and increasingly constrained layer: physical infrastructure.

Every AI operation requires computation. Computation requires hardware. That hardware consumes electricity, generates heat, and depends on large-scale facilities to operate. As AI systems grow more complex and more widely used, the demand for this infrastructure increases dramatically.

This is not linear growth. The computational requirements of modern AI systems scale in ways that outpace traditional expectations. According to the International Energy Agency, data center electricity consumption is projected to more than double between 2024 and 2030, with AI workloads driving a significant share of that increase.
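To put that projection in yearly terms, the short Python calculation below converts a doubling over the period into an implied compound annual growth rate. It assumes exactly 2x between 2024 and 2030, which is a simplification of "more than double", so the result is a lower bound rather than a forecast.

```python
# Assumption: treat "more than double" as exactly 2x, so this is a floor.
growth_factor = 2.0
years = 2030 - 2024

# Compound annual growth rate: the constant yearly rate that, applied
# every year, multiplies the starting value by growth_factor.
annual_rate = growth_factor ** (1 / years) - 1

print(f"Implied annual growth: {annual_rate:.1%}")
```

That works out to roughly 12% per year, compounding, sustained for six years.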

At a certain scale, this stops being a technical concern and becomes a societal one. Communities feel the impact through energy prices and resource allocation. Governments intervene not to control technology, but because the infrastructure supporting it affects broader systems.

AI is no longer just code running somewhere. It is a physical presence with measurable impact.

Why Regulation Is No Longer Optional

As AI systems become more capable and more integrated into everyday processes, the risks expand in lockstep.

Earlier concerns about AI were mostly theoretical: bias in models, potential misuse, ethical edge cases. In 2026, these concerns are becoming operational realities.

AI systems are now involved in generating information at scale, influencing decision-making in finance and healthcare, and interacting directly with users in critical contexts. Misinformation is no longer limited to human-generated content. Automated systems can produce and distribute content at volumes that are difficult to monitor. Decision systems powered by AI can influence outcomes that affect real lives.

Governments are stepping in not simply to restrict innovation, but to establish boundaries that ensure stability. The EU AI Act’s risk-based classification system, which began enforcement in phases from 2025, is one of the most visible examples. Similar frameworks are emerging across Asia and the Americas.

Regulation, in this context, is an attempt to define responsibility. Who is accountable when an AI system makes a harmful decision? How should transparency be enforced when systems become too complex to fully explain? What safeguards are necessary when systems operate autonomously?

These are not technical questions alone. They are legal and ethical questions that require structured answers. For enterprise software development companies and any organization deploying AI at scale, understanding this regulatory landscape is no longer optional; it is a prerequisite for operating responsibly.

The Workforce Is Not Disappearing: It Is Being Reshaped

One of the most immediate and visible effects of AI in software development in 2026 is its impact on work, and the common narrative gets it wrong.

AI does not remove entire roles overnight. It targets specific types of tasks, particularly those that are repetitive, predictable, or easily structured. When those tasks are automated, the nature of the role changes.

Consider a developer who previously spent significant time writing boilerplate code. With AI assistance, that portion of the work is reduced. The developer’s role shifts toward system design, architecture, and problem-solving: the parts that actually require human judgment.

Similarly, in content creation, customer support, and QA, AI handles routine outputs, allowing humans to focus on complex or nuanced interactions.

This creates a compression effect. The same output can be achieved with fewer hours, not because the work disappears, but because each individual becomes more productive. At the same time, new roles emerge, focused on guiding, validating, and managing AI systems.

The challenge is not the disappearance of jobs, but the transition between them. Skills that were valuable in one context become less relevant, while new skills centered around understanding and supervising AI become essential. Whether you’re managing a remote development team or hiring your first offshore software development partner in Bangladesh, this skill shift should be at the top of your evaluation criteria.

When AI Becomes Infrastructure

Perhaps the most significant shift in 2026 is not in capability, but in positioning.

AI is no longer treated as an experimental feature. It is becoming infrastructure.

In earlier stages, companies approached AI cautiously. It was introduced through pilot projects or isolated features, often without clear expectations of return. Success was measured in novelty rather than impact.

That has changed. Organizations are integrating AI directly into core systems: into workflows, decision-making processes, and customer interactions. The reason is straightforward: the value is measurable.

AI reduces time spent on repetitive tasks. It increases throughput. It enables new forms of analysis that were previously impractical.

Once a technology reaches the point where its value can be quantified reliably, it transitions from optional to essential. This is the stage AI has reached. For a SaaS development company or any team evaluating the cost to build a mobile app in 2026, AI tooling is no longer a line item you debate; it’s assumed.

The Shift Toward Efficiency

For a long time, progress in AI was driven by scale. Larger models, more data, more compute: these were the primary levers of advancement.

But this approach has limits.

As systems grow, the cost of maintaining and operating them increases. Infrastructure demands become heavier. Energy consumption rises. At some point, scaling further becomes inefficient.

This is why the industry focus is shifting toward efficiency: achieving the same or better results with fewer resources. This involves optimizing model architectures, improving algorithms, and designing systems that use compute more intelligently.

We see this reflected in the frameworks we work with daily. The conversation around cross-platform app development with Flutter, for instance, has always been about efficiency: one codebase, multiple platforms. The same principle now applies to AI: the teams that win are not necessarily the ones with the biggest models, but the ones using their tools most intelligently.

Early stages of any technology prioritize expansion. Later stages prioritize optimization. AI is entering that second phase. And for software development outsourcing teams in Dhaka and elsewhere, this efficiency mindset is what turns a good development partner into a great one.

Security in an Intelligent System

As AI systems gain the ability to act and adapt, they introduce new categories of risk.

An AI-driven system can generate new strategies dynamically. It can adapt its behavior based on feedback. This applies both to defensive systems and to potential attackers.

Security becomes a continuous process of adaptation. Defensive systems must evolve in real time to counter threats that are themselves evolving. This leads to an environment where both sides, defense and attack, are powered by increasingly sophisticated AI systems.

The result is not just an increase in capability, but an increase in uncertainty. For any enterprise software development company handling sensitive client data, this means security can no longer be a checklist; it has to be a living, continuously updated practice.

What This Means If You’re Building Software Today

When viewed individually, each of these changes (agentic systems, infrastructure pressure, regulation, workforce shifts) may seem manageable.

Together, they represent something larger: a transformation not just in technology, but in how systems operate.

AI is no longer a layer added on top of existing systems. It is becoming part of the foundation. And when a technology reaches that level, the conversation changes. It is no longer about what the technology can do. It is about how we build with it, govern it, and live alongside it.

The defining characteristic of this moment is not that AI has become more powerful. It is that it has become more present. And presence, more than power, is what changes the world.

What is your team doing differently in 2026 because of these shifts? We’d love to hear, especially from other development teams navigating this transition in real time.

Junior Software Engineer

A software engineer focused on solving real-world problems through practical engineering, AI-driven development, and scalable systems.

Comments

Read Next

The Axios Hack of 2026: How a Trusted npm Library Quietly Became a Backdoor Into Thousands of Apps

A stolen npm token. A hidden post-install script. 45 million weekly downloads turned into an attack surface. The Axios supply chain hack of March 2026 was invisible, precise, and terrifying. We break down the 6-step attack chain, the blast radius, and what every dev team should change today.

Logistics Management: The Invisible System Powering Global Commerce

Most logistics companies don't have a technology problem. They have a visibility problem. We've spent two years building a logistics platform that now handles 2,000+ stops per day. This isn't textbook theory it's what we learned about fleet visibility, route profitability, automated invoicing, and why most operations still run on WhatsApp and Excel.

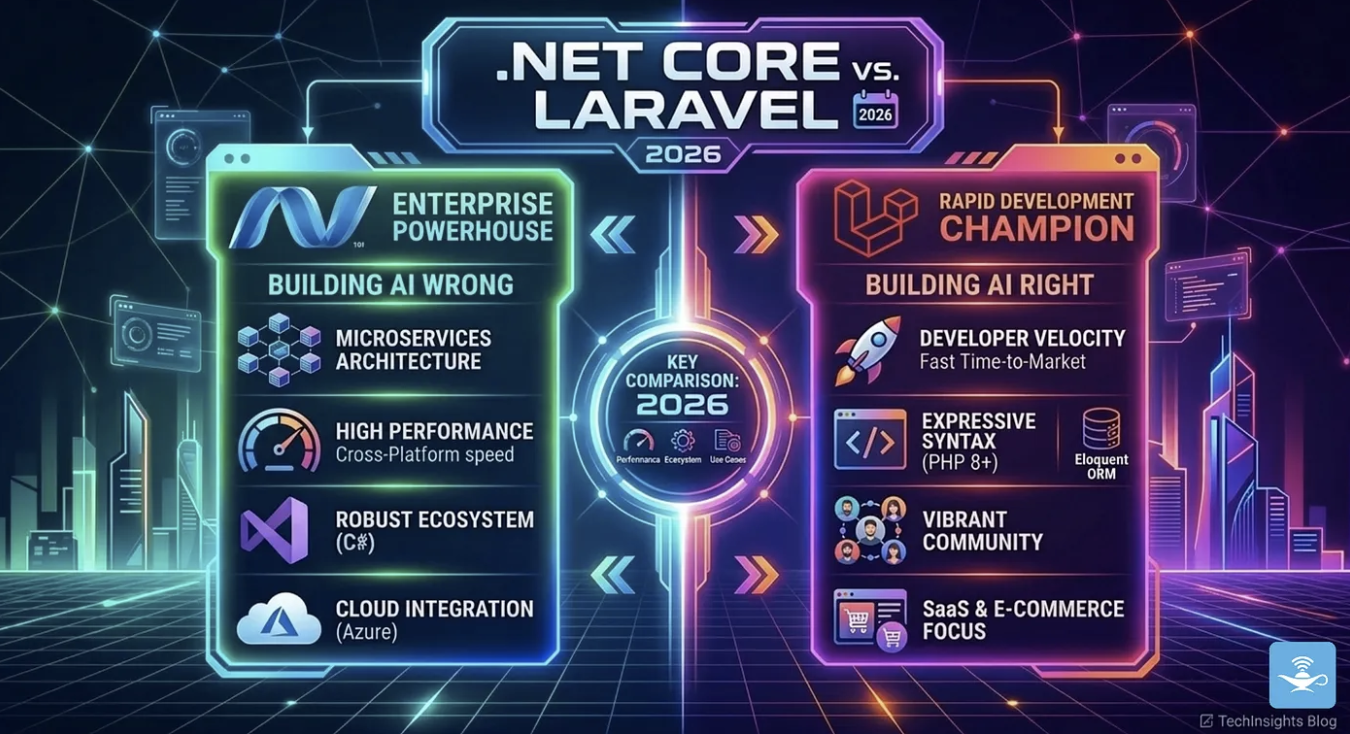

.NET Core vs Laravel in 2026: We Use Both, Here’s How We Decide

We build on both .NET Core and Laravel in production. Our logistics platform (2,000+ stops/day) runs on .NET. Our invoicing product runs on Laravel. This isn't about which is better it's the decision framework we actually use, with real benchmarks, real costs, and when to pick which.